Team Members

Sylvester Benson-Sesay

Phuc Do

Gabriel Sarch

Supervisors

Ross Maddox, Ph.D., Biomedical Engineering, University of Rochester

Customers

Marlene Sutliff and Steven Barnett, UR Community/Deaf Wellness Center; Daniel Brooks, President, HLAA NYS Association

Description

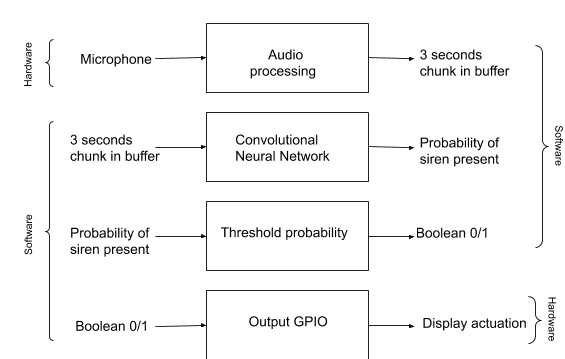

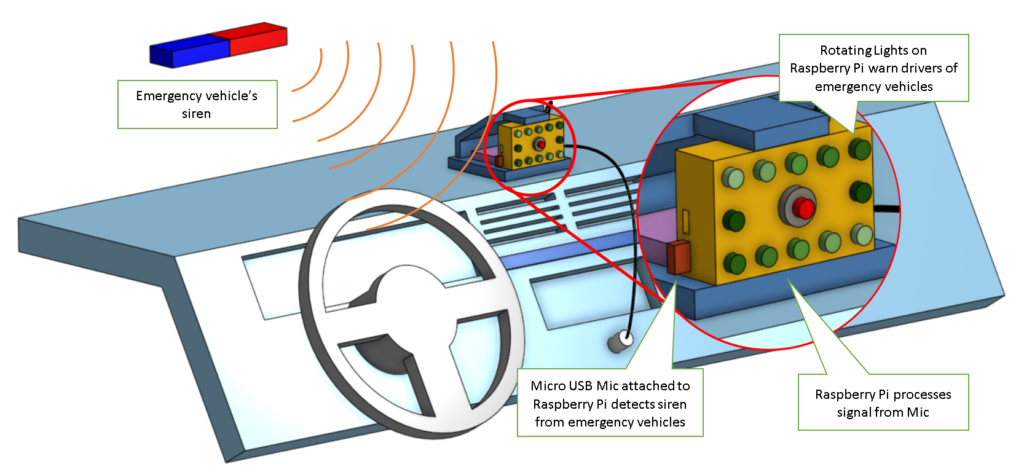

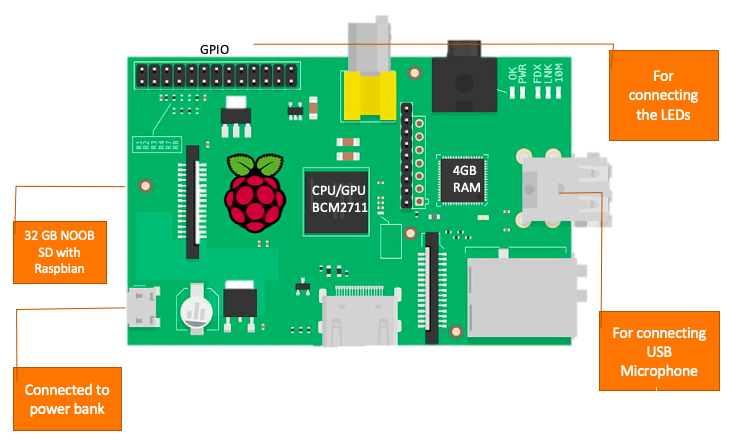

Drivers need to be alerted to approaching emergency vehicles so that they can move out of the vehicle's path. Identifying emergency signals is especially challenging for deaf, hard-of-hearing, and distracted drivers, which puts them at increased risk of collision. Deaf and hard-of-hearing people are three times as likely to be involved in a motor vehicle accident and up to nine times as likely to be seriously injured in the accident (National Academy of Forensic Engineers, 2016). In this project, we developed an in-car device that detects emergency vehicles and notifies the driver of their presence in real time. We trained a convolutional neural network to detect sirens in noisy environments. A demonstration of our real-time detector and a design schematic are shown below.

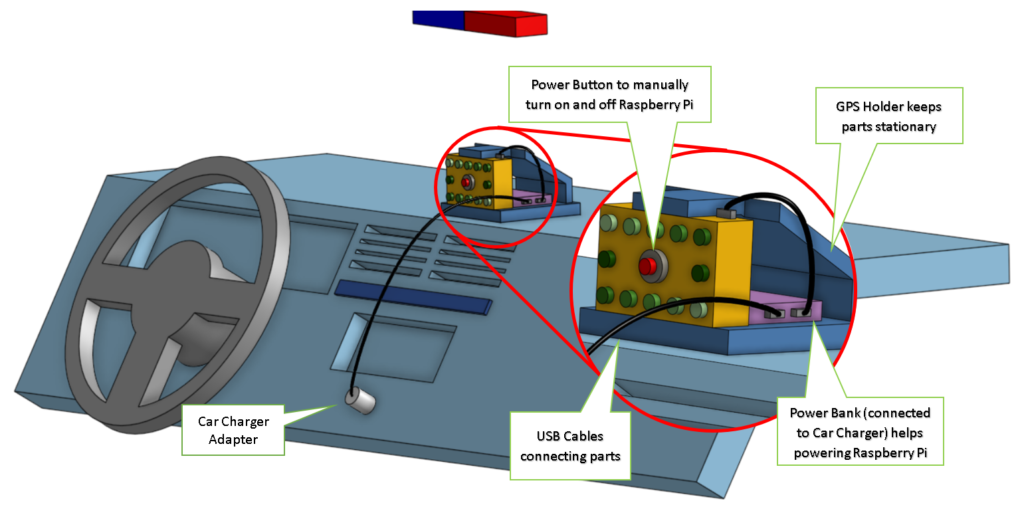

Assembly

Features

- Raspberry Pi 3

- USB Microphone

- Power Bank

- LED Light Case

- USB to Micro USB Cable

*Clipart Raspberry

Detection Software

Software Demonstration Video

Below is a video demonstrating our real-time detector while various types of noise are played.

Sample audio validation testing

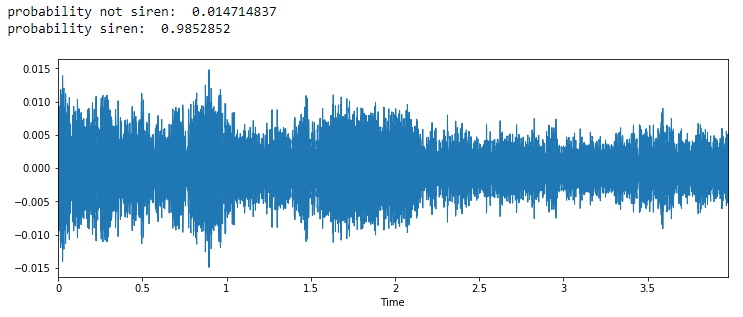

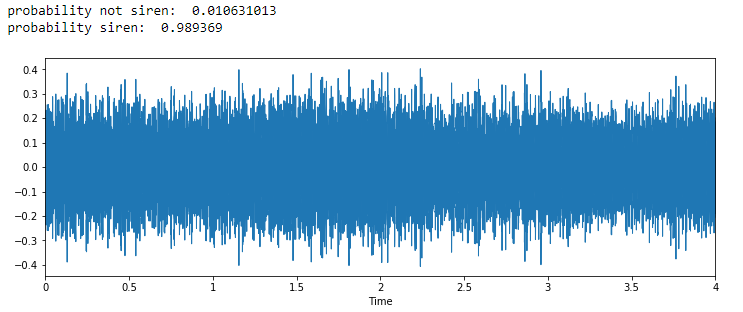

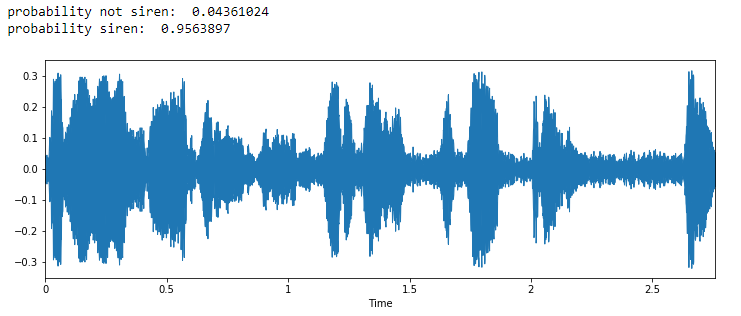

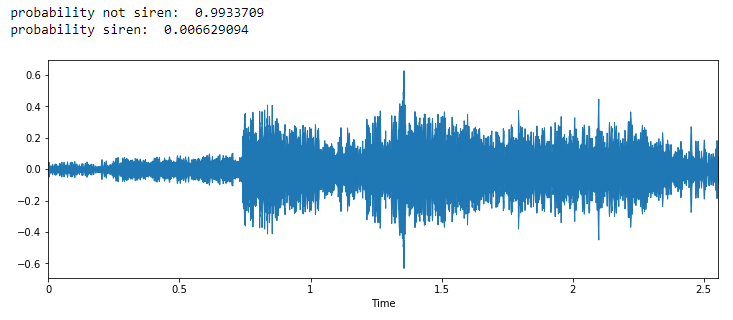

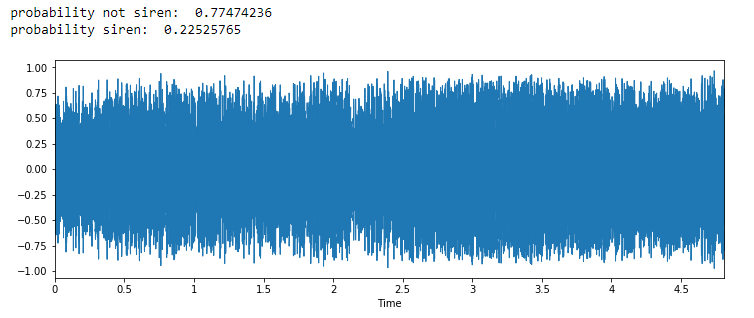

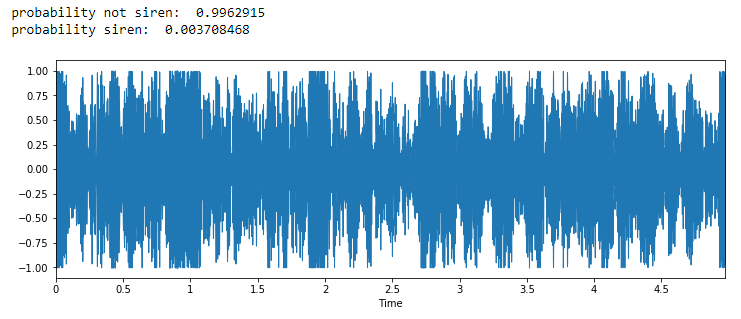

Below are some urban audio samples that we ran through our CNN. You can see the raw audio signal and output detection probabilities for each of the audio samples. Click the audio file to listen to the sample.

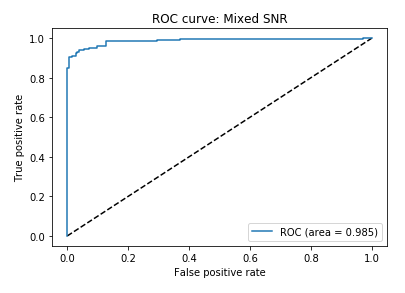

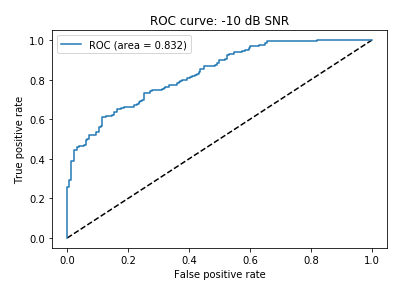

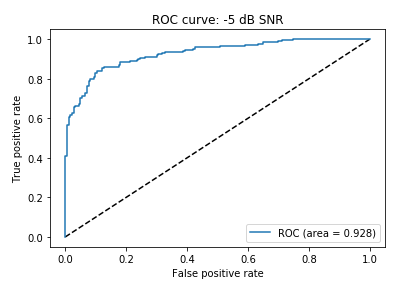

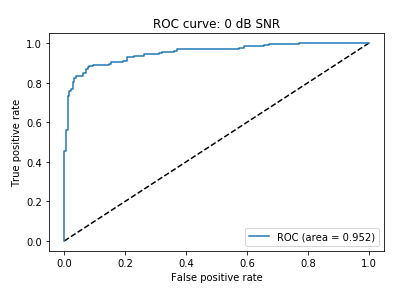

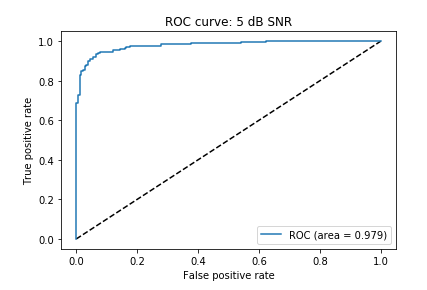

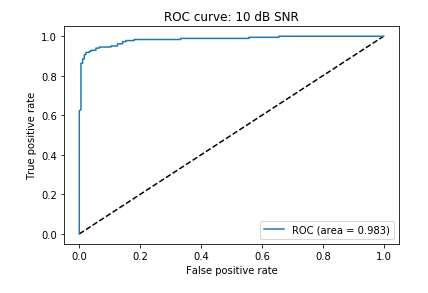

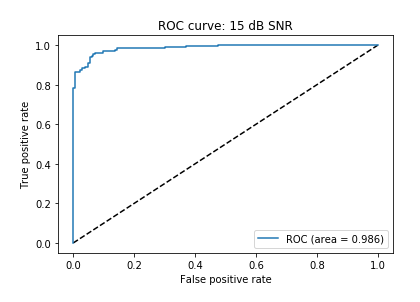

Receiver Operating Characteristic (ROC) Curves

ROC curves plot the true positive rate against the false alarm rate as the detection threshold varies. Ours are computed on our validation set (a held-out 10% of the data). The area under the curve (AUC, shown at the bottom right of each graph) summarizes detection performance: an AUC of 1 is a perfect detector, while an AUC of 0.5 is chance-level detection. We computed ROC curves for sirens at different signal-to-noise ratios (SNRs) by embedding the sirens in different levels of car and environmental noise.
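For readers who want to see the mechanics, here is a minimal sketch of the two steps described above: embedding a siren in noise at a chosen SNR, and computing an ROC curve and its AUC from detector scores. It is an illustration under stated assumptions, not our project code. The function names (`mix_at_snr`, `roc_curve`, `auc`) and the toy labels and scores are hypothetical, and the sketch uses only the Python standard library.

```python
import math


def mix_at_snr(signal, noise, snr_db):
    """Scale `noise` so the signal-to-noise power ratio equals `snr_db`, then add it."""
    p_signal = sum(s * s for s in signal) / len(signal)
    p_noise = sum(n * n for n in noise) / len(noise)
    # Noise power required for the requested SNR (given in decibels).
    target_p_noise = p_signal / (10 ** (snr_db / 10))
    gain = math.sqrt(target_p_noise / p_noise)
    return [s + gain * n for s, n in zip(signal, noise)]


def roc_curve(labels, scores):
    """Return (false_alarm_rates, true_positive_rates) swept over all thresholds.

    `labels` holds 1 for siren and 0 for non-siren; `scores` holds the
    detector's output probability for each sample.
    """
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    # Visit samples from highest to lowest score: lowering the threshold
    # admits one more sample as a "detection" at each step.
    order = sorted(range(len(scores)), key=lambda i: -scores[i])
    fpr, tpr = [0.0], [0.0]
    tp = fp = 0
    for k, i in enumerate(order):
        if labels[i]:
            tp += 1
        else:
            fp += 1
        # Emit a point only once all samples tied at this score are counted.
        if k == len(order) - 1 or scores[order[k + 1]] != scores[i]:
            fpr.append(fp / n_neg)
            tpr.append(tp / n_pos)
    return fpr, tpr


def auc(fpr, tpr):
    """Area under the ROC curve using the trapezoidal rule."""
    return sum(
        (fpr[i] - fpr[i - 1]) * (tpr[i] + tpr[i - 1]) / 2
        for i in range(1, len(fpr))
    )


# Toy example (made-up numbers): 3 siren clips, 3 non-siren clips.
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.3, 0.1]
fpr, tpr = roc_curve(labels, scores)
print(round(auc(fpr, tpr), 3))  # 0.889: 8 of 9 siren/non-siren pairs ranked correctly
```

Repeating the `mix_at_snr` step at several `snr_db` values and scoring each mixture would give one ROC curve per SNR, which is the kind of comparison described above.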

Acknowledgment

Special thanks to Prof. Amy Lerner, Prof. Scott Seidman, Mrs. Marlene Sutliff, and Mr. Daniel Brooks for giving us the opportunity to participate in a wonderful project.

Special thanks to Prof. Ross Maddox, Prof. Steven Barnett, Luke McConnaghy, and many others who helped us throughout these last two semesters. Without you guys, we wouldn’t have been able to complete our goals for the project.

Meet the Team

Sylvester Benson-Sesay

Phuc Do

Gabriel Sarch

Contact Information:

Sylvester: sbensons@u.rochester.edu

Phuc: pdo3@u.rochester.edu

Gabriel: gsarch@u.rochester.edu